Jupyter notebooks can be powerful tools to connect to your remote database. They allow you to streamline, replicate, and document your data. Python Database (DB) APIs are compatible with various databases, and in particular, Python supports relational database systems. In this tutorial, using a Jupyter notebook, we will briefly see how to connect to a PostgreSQL database, which is a popular open-source relational database, and how to make queries in a Jupyter Notebook using Python language.

Before we begin, we need a PostgreSQL database installed on our machine. For a free download of the PostgreSQL server on your local machine and installation instructions, please see https://www.postgresql.org/download/ and https://www.postgresqltutorial.com/postgresql-getting-started/. During the installation, you will be required to create a password. Please make a note of it so that you will use it below to connect to your database in your notebook.

For this notebook, I will show a few simple queries on the sample database dvdrental. You can use any other database for the queries. In case you want to use dvdrental, you can download the database here: https://www.postgresqltutorial.com/postgresql-getting-started/postgresql-sample-database/

The Python DB API implementation for PostgreSQL is “psycopg2”. During the execution of the steps below, you might need to install a psycopg2-related module such as psycopg2-binary. In case your code complains with the error code “ModuleNotFound”, please pip install the missing module in your console. Let’s start by following the steps below:

1- First, make sure that SQLAlchemy Python module is installed and imported. SQLAlchemy is a library used to interact with a wide variety of databases. It works with a DB driver which is “psycopg2” for PostgreSQL. SQLAlchemy generates SQL statements, and psycopg2 communicates with the database.

import sqlalchemy

2- To connect to the database, we need to create a postgresql engine. To create the engine, the syntax is as below:

- engine = sqlalchemy.create_engine(‘postgresql://postgres:your_password@localhost:5432/postgres_db’)

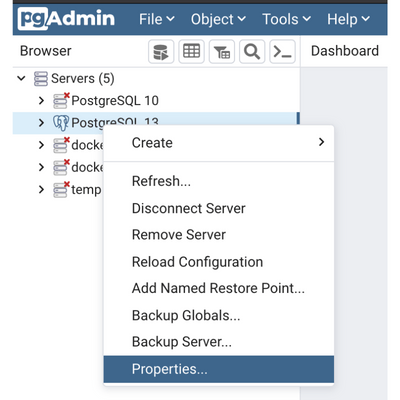

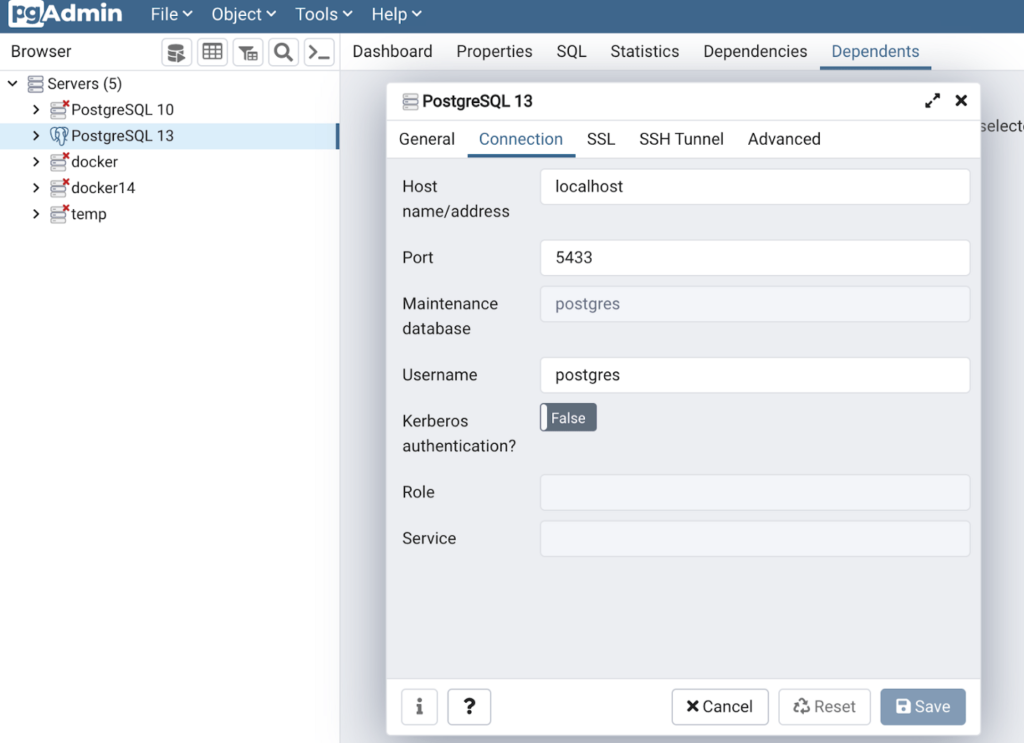

In this syntax, replace “your_password” with the password that you created during the installation of PostgreSQL. “5432” is the default PostgreSQL port number. You can check your port number from your configuration file, or from your GUI management tool such as PGAdmin. First, visit the Properties of your server from your PGAdmin or an equivalent tool such as DBEAVER.

Then, check the Connection tab, and port number. In the figure below, the port number is 5433.

Finally, replace “postgres_db” with “dvdrental” or the name of the database that you will access.

engine = sqlalchemy.create_engine('postgresql://postgres:mypassword@localhost:5433/dvdrental')

3- Install the Jupyter Notebook SQL-extension using either pip or conda on your console.

pip install ipython-sql

Then, load the sql extension:

%load_ext sql

4- Set up the connection:

%sql $engine.url

5- Now, we can use the magic command %sql to query the tables in dvdrental DB. One of the tables in the dvdrental database is the actors table with four columns: actor_id, first_name, last_name, and last_update. Let’s see the first and last names of actors ordered by the first name in ascending order.

%sql SELECT first_name, last_name from actor order by first_name limit 3* postgresql://postgres:***@localhost:5433/dvdrental

3 rows affected.

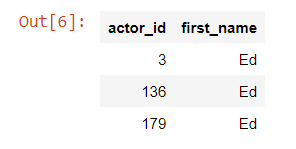

In another query, let’s see the actor_ids and first names of the actors whose name is Ed.

%sql SELECT actor_id, first_name from actor WHERE first_name = 'Ed'* postgresql://postgres:***@localhost:5433/dvdrental

3 rows affected.

If you like, you can write your query on multiple lines. For that, you can write your magic command with a double percentage sign since the magic function spans a cell rather than a line as below. Make sure you write only SQL commands in the cell, and nothing else.

%%sql SELECT actor_id, first_namefrom actorWHERE first_name = 'Ed'* postgresql://postgres:***@localhost:5433/dvdrental

3 rows affected.

Now that you’ve got the basics down, you can start writing more complex queries to your PostgreSQL database and analyzing your data with your preferred techniques in a Jupyter notebook.

You can find the code in the following GitHub link:

https://github.com/nihalsahan/DB/blob/main/ConnectPostgres.ipynb

. . .

To learn more about data science requirements, click here and read our another article.